The Asia Student Supercomputer Challenge (ASC) is the closest thing to true stock car racing that you’ll find on the Student Cluster Competition international circuit.

At the ASC, you drive nodes that are supplied by major sponsor Inspur, with only limited configurability.

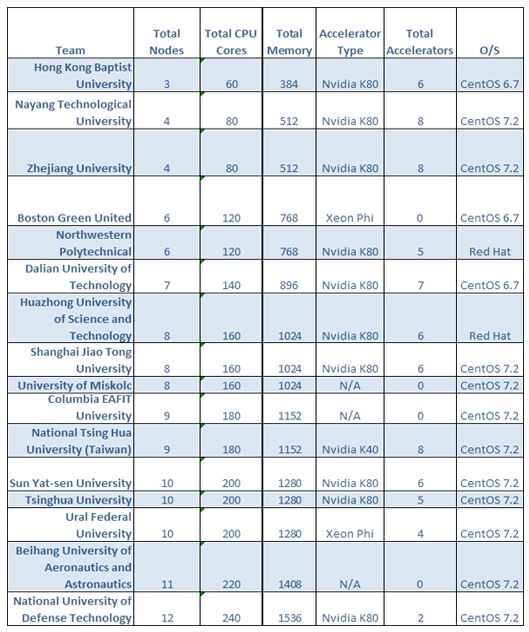

Each of the teams below is using nearly identical basic nodes, each equipped with dual 10-core Xeon processors, 128 GB of RAM (although one team took out some RAM to save power), and Mellanox InfiniBand FDR interconnects.

But there are some big differences, as you can see on the chart below. Some teams took a “small ball” approach, building out systems with low numbers of nodes, but with high numbers of accelerators.

Sometimes this configuration is a signal that a team is going after the LINPACK crown, but this isn’t always the case. It can also mean that the team has confidence in their ability to use accelerators on most of the applications and tune their way to glory on the others.

On the other hand, it can also mean plain bad luck – like in the case of Hong Kong Baptist, who just couldn’t get their fourth node to work with MPI.

It’s interesting how the configurations sort themselves into tranches. Five teams have six or fewer nodes, six teams have seven to nine nodes, and five teams have ten to twelve nodes. Given the 3,000-watt power cap, you’d think that the small cluster teams would be able to run full out, but this isn’t necessarily true. Even these small systems have to be closely managed and, quite often, throttled down in order to stay under the cap.

You would also think that the big node count guys would have a definite advantage given the sheer size of their hardware, but that ain’t necessarily so either – they tend to find that they have to keep much of their cluster at idle during application runs in order to stay under the 3k watt limit.
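To make the power math concrete, here is a rough back-of-the-envelope sketch in Python. Only the 3,000 watt cap comes from the competition rules described above. The per-node, per-accelerator, and switch wattages are hypothetical placeholders chosen for illustration, not measurements from any team's hardware, and the function names are made up for this example.

```python
POWER_CAP_WATTS = 3000  # the competition cap mentioned in the article


def cluster_draw(nodes, node_watts, accel_watts, accels_per_node, overhead_watts=0):
    """Estimate total draw in watts if every node and accelerator runs at full load."""
    per_node = node_watts + accel_watts * accels_per_node
    return nodes * per_node + overhead_watts


def max_active_nodes(node_watts, accel_watts, accels_per_node, overhead_watts=0):
    """Count how many fully loaded nodes fit under the cap (the rest must idle)."""
    per_node = node_watts + accel_watts * accels_per_node
    return (POWER_CAP_WATTS - overhead_watts) // per_node


# Hypothetical figures, assumed for illustration only:
NODE_WATTS = 350      # one dual-Xeon node at full load
ACCEL_WATTS = 250     # one accelerator at full load
SWITCH_WATTS = 100    # interconnect switch and other overhead

# A "small ball" build: 4 nodes with 2 accelerators each.
small = cluster_draw(4, NODE_WATTS, ACCEL_WATTS, 2, SWITCH_WATTS)

# A big build: 12 CPU-only nodes.
big = cluster_draw(12, NODE_WATTS, ACCEL_WATTS, 0, SWITCH_WATTS)
big_usable = max_active_nodes(NODE_WATTS, ACCEL_WATTS, 0, SWITCH_WATTS)
```

With these assumed numbers, both builds land over the cap at full load: the small cluster would have to throttle, and the twelve-node cluster could only run about two-thirds of its nodes flat out at any one time. Real figures will differ, but the shape of the tradeoff is the same.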
