Excitement reigned at the Asia Student Supercomputer Challenge as Zhejiang University set a new student LINPACK record with 12.03 TFlop/s.

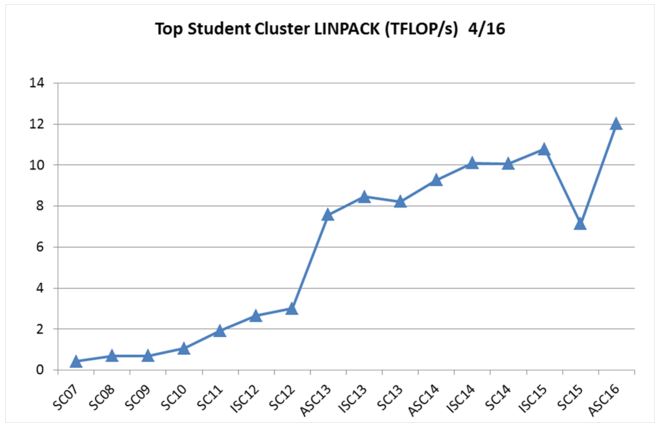

With this result, they eclipsed the previous record of 10.78 TFlop/s set by Jamia Millia Islamia University (JMI) at last year’s ISC’15 competition.

Here’s a historical chart of past student cluster competition LINPACK records…

Setting a new high-water mark in student clustering is great, but what’s really interesting is how they did it.

For the last couple of years, student cluster competition LINPACK records have been set by four-node systems with eight NVIDIA K80 accelerators, plus sophisticated liquid cooling. This has been the case ever since the University of Edinburgh first broke the 10 TFlop/s barrier with its 10.10 mark at ISC’14. JMI came along the next year with essentially the same hardware configuration and pushed the record up a bit more, to 10.78 TFlop/s.

Guts = Glory

The kids from Zhejiang went about it a different way: they used old-fashioned engineering plus daredevil gutsiness to construct their system and make their run at LINPACK glory. First, they figured out that their fans were consuming 5 to 10 percent of the total electrical load on their four-node, eight-K80 cluster.
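To put that fan figure in perspective, here is a quick back-of-the-envelope sketch in Python. The 3,000 watt budget and the 50 percent cut in fan power are assumptions picked for illustration; the team's actual power cap and savings aren't stated here, and the helper names are hypothetical.

```python
def fan_power_range(total_watts, low_share=0.05, high_share=0.10):
    """Watts drawn by fans if they take 5 to 10 percent of the total load."""
    return total_watts * low_share, total_watts * high_share


def watts_freed(fan_watts, reduction):
    """Watts handed back to computation if fan power drops by `reduction` (0..1)."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return fan_watts * reduction


if __name__ == "__main__":
    ASSUMED_BUDGET_W = 3000.0   # assumption: not a figure from the article
    ASSUMED_REDUCTION = 0.5     # assumption: hypothetical cut in fan power

    low, high = fan_power_range(ASSUMED_BUDGET_W)
    print(f"Fans draw roughly {low:.0f} to {high:.0f} W of {ASSUMED_BUDGET_W:.0f} W")
    print(f"Halving that frees about {watts_freed(low, ASSUMED_REDUCTION):.0f} "
          f"to {watts_freed(high, ASSUMED_REDUCTION):.0f} W for compute")
```

Under a fixed power cap, every watt taken away from the fans is a watt the processors and accelerators can spend, which is why even a single-digit percentage is worth chasing.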

With that in mind, they looked at ways to minimize fan power. They determined that their existing rack layout wasn’t using airflow as well as it could, so they rearranged the rack to move more air through it.

They then removed some fans, which is not for the faint of heart, and installed software to let them dynamically control the speed of the remaining fans. This gave them more watts to dedicate to computation. The final piece of the puzzle was some judicious overclocking of their K80 accelerators.
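We don't know which fan control software the team used, but the general idea of dynamic fan control can be sketched as a simple temperature-to-speed curve. Everything below is an assumption for illustration: the `fan_duty` function, the 40 and 80 degree thresholds, and the 30 percent idle floor are hypothetical, and a real setup would read sensors and set fan speeds through the server's management interface rather than a Python function.

```python
def fan_duty(temp_c, min_temp=40.0, max_temp=80.0, min_duty=30, max_duty=100):
    """Map a temperature reading (Celsius) to a fan duty cycle in percent.

    At or below min_temp the fan idles at min_duty, at or above max_temp it
    runs flat out, and in between the duty cycle rises linearly.
    All thresholds are hypothetical defaults.
    """
    if temp_c <= min_temp:
        return min_duty
    if temp_c >= max_temp:
        return max_duty
    fraction = (temp_c - min_temp) / (max_temp - min_temp)
    return round(min_duty + fraction * (max_duty - min_duty))


if __name__ == "__main__":
    # Made-up sample readings, just to show the shape of the curve.
    for reading in (35.0, 60.0, 72.0, 90.0):
        print(f"{reading:.0f} C -> fan at {fan_duty(reading)}%")
```

The point of a curve like this is that fans only spin as fast as the current temperature demands, instead of running at a fixed, conservative speed that burns power the whole time.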

The end result was a LINPACK score of 12.03 TFlop/s, which handily beat last year’s ISC’15 record of 10.78 TFlop/s.
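For a sense of how big a jump that is, here is a small Python sketch that works out the percentage gain of each record over the one before it. The scores are the ones quoted in this article; the function names are just illustrative.

```python
# Student cluster LINPACK records quoted in the article, in TFlop/s.
records = [
    ("Edinburgh, ISC'14", 10.10),
    ("JMI, ISC'15", 10.78),
    ("Zhejiang, ASC", 12.03),
]


def percent_gain(old, new):
    """Relative improvement of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


def record_gains(entries):
    """Pair each record holder with their gain over the previous record."""
    gains = []
    for (_, prev_score), (name, score) in zip(entries, entries[1:]):
        gains.append((name, round(percent_gain(prev_score, score), 1)))
    return gains


if __name__ == "__main__":
    for name, gain in record_gains(records):
        print(f"{name}: +{gain}% over the previous record")
```

By this arithmetic, JMI's record was about 6.7 percent better than Edinburgh's, while Zhejiang's is about 11.6 percent better than JMI's, a noticeably bigger step on what is described as the same basic four-node, eight-GPU layout.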

I really have to take my hat off to these kids. Other teams have tried to reduce power consumption by using liquid cooling and other means, but no team has ever had the guts to try overclocking their CPUs or GPUs.

I can kind of see why they wouldn’t try to overclock their CPUs. Most motherboards and BIOSes aren’t overclocking-friendly when it comes to processors. However, overclocking GPUs is done all the time in the enthusiast world and can be carried out relatively risk-free. Like most computing components, if GPUs get too hot, they’ll shut down automatically.

Great job, Team Zhejiang! Maybe you’ll inspire future student cluster warriors to take more risks. Enjoy the 10,000 RMB ($1,500, £1,000) award!

Posted In: Latest News, ASC 2016 Wuhan